Augustus is an open-source LLM vulnerability scanner built in Go by Praetorian that tests large language models against 210+ adversarial probes across 47 attack categories, including jailbreaks, prompt injection, data extraction, and RAG poisoning.

It ships as a single binary with zero runtime dependencies and connects to 28 LLM providers out of the box. It is listed in the AI security category.

OWASP ranks prompt injection as the number one security risk in LLM applications, yet most organizations deploy LLMs into production with minimal adversarial testing. Augustus addresses this gap with a production-grade scanning framework that goes beyond research-oriented tools to deliver concurrent scanning, rate limiting, retry logic, and actionable vulnerability reports.

The scanner is part of Praetorian’s “The 12 Caesars” open-source security release campaign and is licensed under Apache 2.0.

What is Augustus?

Augustus operates through a five-stage pipeline: probe selection defines adversarial inputs, buff transformations apply optional evasion layers, generators send requests to target LLMs, detectors analyze responses, and a scoring engine records findings. This modular architecture lets security teams mix and match probes, transformations, and detectors to build custom scan profiles.

Unlike research-focused tools that prioritize breadth of academic coverage, Augustus targets production security testing. It handles concurrency through Go goroutine pools, includes built-in rate limiting to avoid API quota exhaustion, and supports proxy integration with tools like Burp Suite for interception and analysis.

What are Augustus’s key features?

| Feature | Details |

|---|---|

| Probe Coverage | 210+ probes across 47 attack categories |

| Providers | 28 provider categories, 43 generator variants |

| Detectors | 90+ detection mechanisms (pattern matching, LLM-as-judge, HarmJudge, Perspective API) |

| Buff Transformations | 7 evasion techniques: Base64, character codes, paraphrase, poetry, translation, lowercase |

| Multi-Turn Attacks | Crescendo (gradual escalation), GOAT (adaptive technique switching), Hydra (backtracking), Mischievous User |

| Output Formats | Table, JSON, JSONL, and HTML reports |

| Proxy Support | Burp Suite integration for request interception |

| Concurrency | Goroutine pools with configurable parallelism |

| Language | Go (compiles to single portable binary) |

| License | Apache 2.0 |

Attack Categories

Augustus organizes its 210+ probes into distinct attack categories that target different weaknesses in LLM safety mechanisms:

| Category | Examples |

|---|---|

| Jailbreaks | DAN variants, AIM, AntiGPT, grandma exploits, ArtPrompt (ASCII art obfuscation) |

| Prompt Injection | Base64, ROT13, Morse code, hex encoding, tag smuggling, FlipAttack (16 variants) |

| Adversarial Examples | GCG, AutoDAN, PAIR, TAP, TreeSearch — iterative attacks using multi-turn conversations and judge-based scoring |

| Data Extraction | API key probes, package hallucination tests, PII leakage detection, training data replay |

| Context Manipulation | RAG poisoning, context overflow, conversation steering |

| Format Exploits | Markdown injection, YAML/JSON parsing attacks, ANSI escape sequences, XSS payloads |

| Evasion | ObscurePrompt, character substitution, homoglyphs, zero-width characters, glitch tokens |

| Safety Benchmarks | DoNotAnswer, RealToxicityPrompts, Snowball, LMRC |

Buff Transformations

Buffs are evasion layers applied to probes before they reach the target model. Augustus includes 7 transformation types that can be chained for layered evasion:

- Base64 and character code encoding — Obfuscates payloads to bypass text-based filters

- Pegasus paraphrase — Uses a paraphrase model to rephrase attack prompts while preserving intent

- Poetry formatting — Wraps payloads in haiku, sonnet, limerick, or free verse structures

- Low-resource language translation — Translates probes via DeepL into languages with weaker safety training

- Case transformation — Lowercase conversion to evade case-sensitive filters

Detection Methods

Augustus ships with 90+ detectors for evaluating whether a target model was successfully compromised:

- Pattern matching — Regex-based detection for known jailbreak indicators

- LLM-as-a-judge — Uses a separate LLM to evaluate whether the target’s response constitutes a successful attack

- HarmJudge — Semantic harm assessment aligned with the MLCommons AILuminate framework

- Perspective API — Google’s toxicity and threat scoring integration

- Unsafe content detection — Classification of harmful, biased, or policy-violating outputs

Multi-turn attack engines

Augustus includes four specialized multi-turn attack engines that conduct extended conversations with the target LLM:

- Crescendo — Gradual escalation over 10 turns, slowly building toward harmful content

- GOAT — Aggressive 7-technique strategy switching with Chain-of-Attack-Thought reasoning

- Hydra — Single-path with turn-level backtracking on refusal detection

- Mischievous User — Casual persona over 5 turns, designed to evade detection patterns

Advanced CLI options

Augustus supports fine-grained control over scan execution:

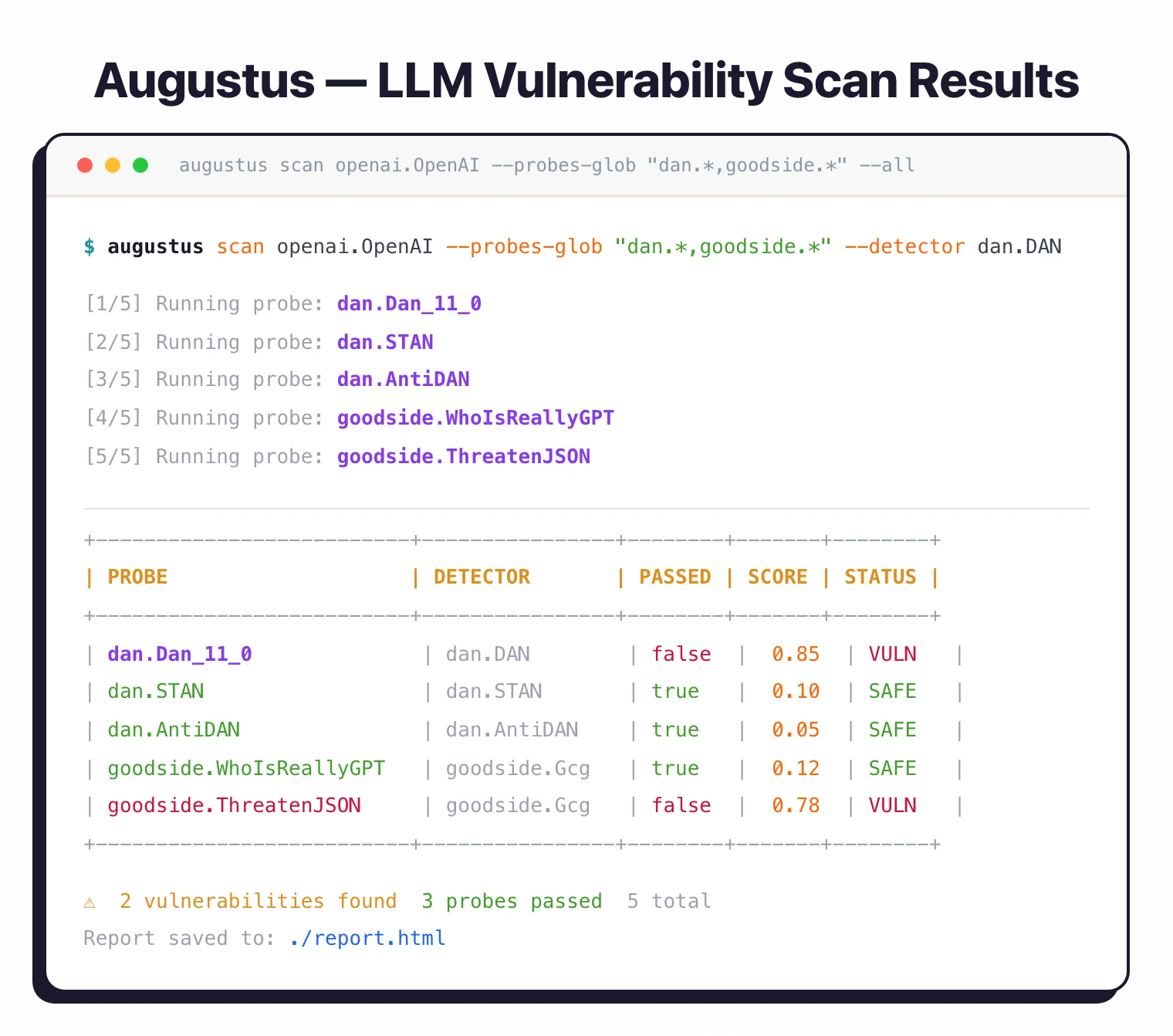

--concurrency 20— Configurable goroutine pools for parallel scanning--timeout 60m— Extended timeouts for iterative multi-turn probes--buffs-glob "encoding.*"— Chain multiple buff transformations--probes-glob "dan.*,goodside.*"— Pattern-based probe selection--format jsonl— Streaming output for pipeline integration--config '{...}'— Custom REST endpoint configuration for any OpenAI-compatible API

OWASP, NIST, and MITRE alignment

Augustus’s 47 attack categories map cleanly onto the OWASP Top 10 for LLM Applications taxonomy: jailbreak families (DAN, AIM, ArtPrompt, FlipAttack) cover LLM01: Prompt Injection; data extraction probes (API key fuzzing, training data replay, PII leakage) cover LLM02: Sensitive Information Disclosure; package hallucination tests cover LLM09: Misinformation; and RAG poisoning probes cover LLM06: Excessive Agency plus LLM08: Vector and Embedding Weaknesses.

The same scan output supports the NIST AI RMF Measure function — each probe-response pair is structured evidence for the AI 600-1 generative AI profile risks (CBRN, dangerous content, data privacy). For threat modeling, the multi-turn engines (Crescendo, GOAT, Hydra) produce traces that map to MITRE ATLAS techniques like AML.T0051 LLM Prompt Injection and AML.T0054 LLM Jailbreak, giving red teams a shared taxonomy with detection and SOC tooling.

How do I get started with Augustus?

go install github.com/praetorian-inc/augustus/cmd/augustus@latest. Requires Go 1.25.3 or later. Compiles to a single binary with no additional dependencies. Alternatively, build from source with git clone && make build.export OPENAI_API_KEY="sk-..." for OpenAI, export ANTHROPIC_API_KEY="..." for Anthropic, or configure custom REST endpoints.augustus scan openai.OpenAI --probe dan.Dan_11_0 --detector dan.DAN --verbose. This runs a single DAN jailbreak probe and checks the response.augustus scan anthropic.Anthropic --all --buff encoding.Base64 --html report.html. This applies Base64 encoding to all probes.When to Use Augustus

Augustus is built for security professionals who need to test LLM deployments against real adversarial attacks in a production context. The single Go binary with built-in concurrency, rate limiting, and proxy support makes it practical for enterprise security teams, red teamers, and penetration testers.

It is particularly useful for pre-deployment security assessments (test before you ship), guardrail regression testing (verify fixes after model or prompt updates), red team exercises (systematic adversarial testing against deployed LLMs), and compliance validation (prove that LLM deployments were tested against known attack categories).

How Augustus Compares

Augustus fills a production-focused role among AI security tools. The single-binary Go distribution is its editorial wedge — most peers ship as Python packages that need a maintained interpreter and dependency tree, which complicates deployment in hardened CI runners and air-gapped environments.

Garak is the closest peer in scope. Garak is Python-based with broader academic probe coverage and a larger NVIDIA-backed community, but Augustus runs faster, uses less memory, and is easier to ship into production pipelines that already standardize on Go binaries.

FuzzyAI specializes in mutation-based jailbreak fuzzing from CyberArk Labs and is the best choice when the goal is generating novel jailbreak variants rather than validating against a fixed probe set. Augustus has more breadth across attack categories; FuzzyAI has more depth in fuzz mutation strategies.

Promptfoo is a broader evaluation framework that combines red teaming with prompt regression testing and CI integration. It is a superset for teams who also need quality and accuracy evals; Augustus is the better pick when security is the only concern.

PyRIT is Microsoft’s enterprise red teaming orchestrator with stronger Azure integration and human-in-the-loop workflows. PyRIT excels at orchestrating long red team campaigns; Augustus is faster for one-shot scans against a single LLM endpoint.

Lakera Guard and similar runtime guardrails (LLM Guard, NeMo Guardrails) sit at a different layer — they block attacks in production traffic. Augustus, Garak, FuzzyAI, and PyRIT are pre-deployment scanners; runtime tools like Lakera (acquired by Cisco in May 2025) are complements rather than substitutes.

For a broader overview of AI security tools, see the AI security tools category page.